Misconception:

Astronomers can reliably calculate cosmological distances.

Answer:

Quantifying depth or radial distance from our point of observation is arguably the most daunting and pressing problem facing astrophysics. Location relative to Earth is a primary property of celestial objects, but almost the entire 3-D map of the observable universe is based not upon measurements but on unverifiable guesswork.

There are only two reliable, cross-checked ways to calculate remoteness over astronomical distances: radar and triangulation. Radar (bouncing a microwave signal off an object and analysing the echo) is constrained in practice to objects within the Solar System. Even if a radio transmitter were developed that was powerful and accurate enough to reach stars just 50 light years away, the turnaround time would be a hundred years!
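The arithmetic behind radar ranging is simple enough to sketch. The snippet below is only an illustration: the function names and the 280-second echo delay are assumptions made for the example, not values from any observing programme.

```python
# Speed of light in vacuum, metres per second (exact by SI definition).
C_M_PER_S = 299_792_458.0
# One astronomical unit in metres (IAU 2012 definition).
AU_M = 149_597_870_700.0


def radar_distance_m(round_trip_s: float) -> float:
    """Distance to the target in metres, given the round-trip echo delay.

    The signal covers the distance twice (out and back), hence the factor 2.
    """
    return C_M_PER_S * round_trip_s / 2.0


def round_trip_years(distance_ly: float) -> float:
    """Echo delay in years for a target at the given distance in light years."""
    return 2.0 * distance_ly


# Hypothetical echo delay of 280 seconds, a Solar System scale figure.
print(radar_distance_m(280.0) / AU_M, "AU")

# The 50 light year case from the text.
print(round_trip_years(50.0), "years")  # 100.0 years
```

The second call shows why the method stops at the edge of the Solar System: the wait grows in step with the distance, regardless of how powerful the transmitter is.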

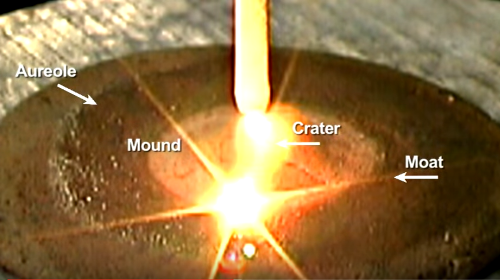

A diagram illustrating parallax as an astronomical distance measure. Credit: Wikimedia Commons.

Triangulation, specifically the special case known as parallax, is a proven, reliable way of measuring distance. Trigonometry gives us the means to calculate the perpendicular distance from the base of an isosceles triangle to the apex, given the length of the baseline and the size of the angles at either end. The accuracy of triangulation depends critically upon the length of the baseline in proportion to the distance to the target, so as to avoid too narrow an angle at the apex. The diameter of the Earth’s orbit about the Sun provides a baseline that is 2 Astronomical Units long, giving an outer limit of accurate measurement of about 300 light years. The Hipparcos satellite, which operated from 1989 to 1993, pushed that out to roughly 1000 LY.
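To see how narrow the apex angle becomes, the triangle described above can be worked through numerically. This is a minimal sketch: the function names are invented for the example and the AU-per-light-year conversion is rounded.

```python
import math

AU_PER_LY = 63_241.077  # astronomical units in one light year (approximate)
ARCSEC_PER_RAD = 180.0 * 3600.0 / math.pi


def apex_distance(baseline: float, base_angle_rad: float) -> float:
    """Perpendicular distance from the base of an isosceles triangle to its apex.

    Half the baseline and the perpendicular form a right triangle, so the
    distance is (baseline / 2) * tan(base angle).
    """
    return (baseline / 2.0) * math.tan(base_angle_rad)


def parallax_arcsec(distance_ly: float) -> float:
    """Half the apex angle, in arcseconds, for a 2 AU baseline."""
    distance_au = distance_ly * AU_PER_LY
    return math.atan(1.0 / distance_au) * ARCSEC_PER_RAD


def distance_ly_from_parallax(p_arcsec: float) -> float:
    """Invert the measurement: recover the distance from the parallax angle."""
    p_rad = p_arcsec / ARCSEC_PER_RAD
    # Each base angle is a right angle minus the parallax angle.
    return apex_distance(2.0, math.pi / 2.0 - p_rad) / AU_PER_LY


for d_ly in (10.0, 300.0, 1000.0):
    print(f"{d_ly:7.0f} LY -> parallax {parallax_arcsec(d_ly):.5f} arcsec")
```

At 300 light years the angle to be measured is roughly 0.011 of an arcsecond, and at 1000 light years it is about a third of that, which is why the baseline sets such a hard limit on the method.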

Anything further is based upon the notion that we can identify so-called standard candles, which are classes of recognisable objects that all have the same level of intrinsic brightness. Two problems exist for any class of standard candle. The principal one is calibration, in other words, precisely determining the absolute magnitude of the candle. The second problem is establishing which objects qualify for membership of a particular class of candles. One of the stock methods for extra-galactic distance measurement is given by the rate of oscillation of stars known as Cepheid Variables. The method rests on a correlation between the time taken for a variable star to fluctuate and its intrinsic brightness (the period-luminosity relation). Unfortunately, improved instrumentation has subsequently shown that Cepheid Variables are in fact not a class of standard candles at all. Supernovae (exploding stars) are also invoked as standard candles, to measure distances appreciably greater than those apparently given by period-luminosity in variable stars. They too have since been exposed as having non-standard intrinsic brightness.
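The calibration problem can be made concrete with the standard distance modulus relation, m - M = 5 log10(d) - 5, where d is in parsecs. The sketch below uses that textbook formula; the function names and the 0.2 magnitude calibration error are assumptions chosen purely for illustration.

```python
def distance_pc(apparent_mag: float, absolute_mag: float) -> float:
    """Distance in parsecs implied by the distance modulus m - M."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


def distance_factor(delta_mag: float) -> float:
    """Factor by which the inferred distance grows when the assumed absolute
    magnitude is made brighter (numerically smaller) by delta_mag."""
    return 10.0 ** (delta_mag / 5.0)


# A candle assumed to have absolute magnitude 0 and observed at magnitude 5.
print(distance_pc(5.0, 0.0), "pc")

# Hypothetical calibration error of 0.2 magnitudes in the candle's brightness.
print(f"distance scaled by {distance_factor(0.2):.3f}")
```

Because the relation is exponential, a calibration error of a fifth of a magnitude shifts every distance derived from that candle by nearly ten percent, in the same direction.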

In 1929, astronomer Edwin Hubble found what he initially thought was a systematic relationship between the spectral redshift of a sample of 24 nearby galaxies and their apparent luminosity. The idea was that external galaxies were standard candles, and that their apparent luminosity was proportional to how far away they were. Galaxies too have been shown to have enormously varying brightness, and are useless as standard candles.

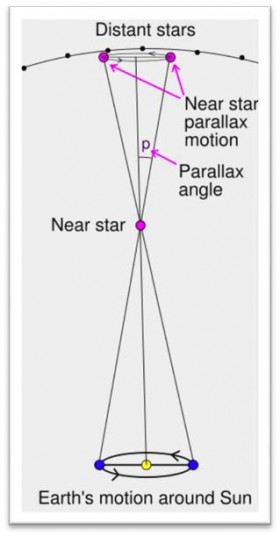

The extragalactic distance ladder. It is ultimately all dependent upon the arbitrarily obtained Hubble constant. Credit: Wikimedia Commons.

Each step on the distance ladder introduces further uncertainty. Would it not be better to use primary indicators to calculate galaxy distances, and thus remove the need for the treacherous distance ladder?

~Stephen Webb, Measuring the Universe
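How quickly uncertainty accumulates along the ladder can be sketched with two common ways of combining errors. Everything in this example is assumed for illustration: the per-rung percentages are hypothetical, and adding errors in quadrature presumes that the rungs are statistically independent, which is generous given that each rung is calibrated against the one below it.

```python
import math


def quadrature_uncertainty(rung_errors: list[float]) -> float:
    """Combined fractional uncertainty if the rung errors are independent."""
    return math.sqrt(sum(e * e for e in rung_errors))


def worst_case_uncertainty(rung_errors: list[float]) -> float:
    """Combined fractional uncertainty if every rung errs in the same direction."""
    total = 1.0
    for e in rung_errors:
        total *= 1.0 + e
    return total - 1.0


# Hypothetical fractional errors for three successive rungs of the ladder.
rungs = [0.05, 0.10, 0.15]

print(f"independent errors: {quadrature_uncertainty(rungs):.3f}")
print(f"worst case:         {worst_case_uncertainty(rungs):.3f}")
```

Under either rule the combined figure is larger than the error of any single rung, and it only grows as more rungs are added.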

In short, apart from the stars that lie within a radius of 1000 light years of Earth, astrophysics has no accurate, verifiable measure of distance based upon a known benchmark value. The appalling implication for cosmology is that, at this time, the structure, extent, and age of the Universe are impossible to determine with precision or certainty.

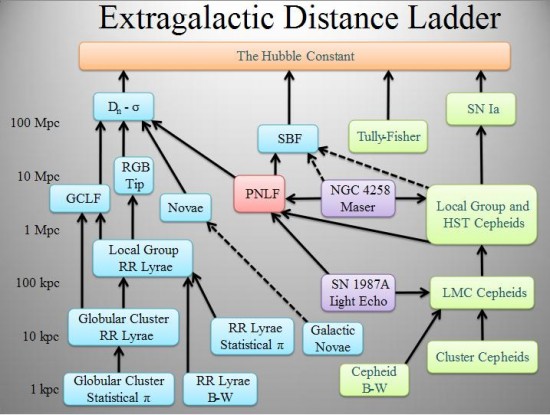

Because plasma structures are consistent and independent of scale, there is a possibility that plasma physicists may be able to establish a distance calibration that is solidly based and dependable across the whole span of the cosmos. That would revolutionise cosmology beyond recognition, and put electromagnetic plasma right at the frontier of astrophysics.