New Insights into the Holy Grail of Physics – or What Do Rabbits Have to Do with the Cosmos?

by Mathias Hüfner

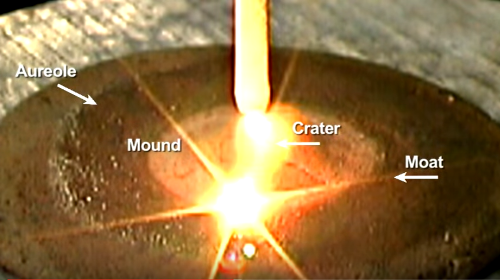

Abstract: This paper develops a unified physical model in which electrons are not point-like charges but real toroidal current structures with intrinsic rotation. The coupling of translational and rotational motion yields a Pythagorean velocity relation that extends from the electron to cosmic Birkeland currents and is structurally governed by the Golden Ratio. The classical interpretation of the Lorentz transformation is identified as a misconception: the spacetime interval does not describe a four-dimensional metric but orthogonal components of motion within three-dimensional space. Under strong electromagnetic fields, electrons contract to proton-scale toroidal structures, allowing nuclear forces to be interpreted as purely electromagnetic effects. The neutron emerges as a bound state of a proton and a contracted electron. Experiments such as SAFIRE support the possibility of nuclear transformations at moderate energies. Overall, the model leads to a simplified and more intuitive physics that unifies relativity, quantum theory, nuclear physics, and cosmology within a geometric–dynamic framework.

Introduction: The “Holy Grail of Physics” is commonly understood as a theory that describes the microcosm and the macrocosm within a single unified framework. The first idea in this direction was proposed in 1956 by Hugh Everett III, a doctoral student of John Archibald Wheeler. In his dissertation, The Theory of the Universal Wave Function, Everett introduced an interpretation of quantum mechanics that immediately met resistance because it contradicted the then-dominant Copenhagen interpretation, formulated largely by Niels Bohr. In 1957 Everett postulated “relative” quantum states. The physicist Bryce DeWitt popularized this approach in the 1960s and 1970s under the name Many Worlds, referring to the different possible states of a quantum system after a measurement. Everett’s aim was to avoid the collapse of the wave function and to explain its apparent subjective collapse through the mechanism of quantum decoherence. This was intended to resolve the paradoxes of quantum theory, such as the EPR paradox and Schrödinger’s cat, by asserting that every possible outcome and every event is realized in its own world.

In this essay, a wave function of the electron is presented that requires no interpretation, does not collapse, and whose real superpositions allow an extension across many orders of magnitude from the interior of the atom to Birkeland currents.

Click below to download Dr. Hüfner’s entire 9-page paper as a PDF document…

Dr. Mathias Hüfner is a volunteer German translator for The Thunderbolts Project. He studied physics from 1964 until 1970 in Leipzig, Germany, specializing in analytical measurement technology for radioactive isotopes. He then worked at Carl Zeiss Jena until 1978 on the development of laser microscope spectral analysis, where he was responsible for developing the software that evaluated the spectral data. Later he earned his doctorate in engineering at the Friedrich Schiller University and worked there for 15 years as a scientific assistant. Some years after the political change in East Germany, he worked as a freelance computer science teacher for the last few years before his retirement.

Since 2015, Mathias has run a German website of The Thunderbolts Project: http://mugglebibliothek.de/EU

His latest book is entitled Dynamic Structures in an Open Cosmos.

The ideas expressed in Thunderblogs do not necessarily express the views of T-Bolts Group Inc. or The Thunderbolts Project.